… they have to be injected into somebody to have an effect. This is an obvious, common-sense, undisputed fact. Why are so many people of influence and power (most disappointingly Gov. Gavin Newsom) acting like it’s not?

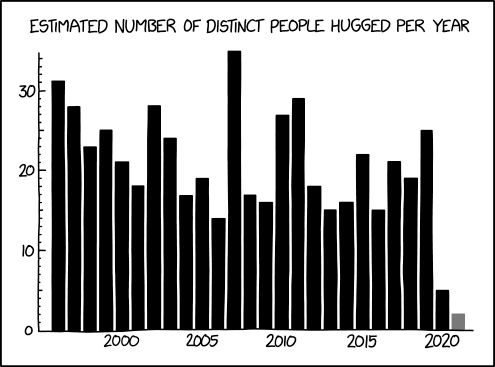

In this age of online data, we have the advantage of having the actual numbers a click away. As of today, 31,161,075 doses have been distributed, of which 12,279,180 have been administered, leaving 18,881,895 doses chilling out in the freezers. Despite all the public clamor to administer the vaccines, about 60% of the doses made for US use so far are still just sitting there in inventory, even though we are over a month into the rollout.

And over at the New York Times, they deem it important enough to extend their morning newsletter to … try and convince people that they should go get vaccinated:

Right now, public discussion of the vaccines is full of warnings about their limitations: They’re not 100 percent effective. Even vaccinated people may be able to spread the virus. And people shouldn’t change their behavior once they get their shots. These warnings have a basis in truth, just as it’s true that masks are imperfect. But the sum total of the warnings is misleading, as I heard from multiple doctors and epidemiologists last week. “It’s driving me a little bit crazy,” Dr. Ashish Jha, dean of the Brown School of Public Health, told me. “We’re underselling the vaccine,” Dr. Aaron Richterman, an infectious-disease specialist at the University of Pennsylvania, said. “It’s going to save your life — that’s where the emphasis has to be right now,” Dr. Peter Hotez of the Baylor College of Medicine said.

No, it can’t save my life if you won’t let me get it. If everybody in the US were offered the vaccine right here, right now, you’d have 200 million takers, maybe even 250 million. Getting people to want the vaccine is not the problem right now!

Professionals at Pfizer, Moderna, and BioNTech did incredible, amazing, invaluable work, developing astoundingly effective vaccines in record time, and their work will save millions of lives. And it’s being wasted by politically driven delays, when every day that vaccination is postponed, another 4,000 Americans die needlessly. I can only imagine how infuriating this deadly government obstruction must be to the vaccine developers, hearing about thousands, then tens of thousands of American lives that could have been saved if only all 30 million doses they made so far had been administered right away.

The corrosive effects of the lies from government officials in Sacramento and DC are despicable and deserve discussion and long-overdue corrective action. That their “plan” was “do no planning and let the insurance companies, medical facilities, and county health departments deal with it on their own” is deplorable, and that they’re still refusing to get those vaccine doses off the shelves and into people’s arms is unconscionable.

![[Available vaccines are unavailable]](https://www.mandelson.org/cvs-sm.png)