Ubuntu Eee: Not ready for prime-time

On Sunday I bought myself a new toy, an Asus Eee 900. The general opinion on the ’net seemed to be that the preinstalled Xandros Linux was inferior to Ubuntu Eee (note: not a Canonical-endorsed project), so I installed the latter. I’m going to restrict my discussion to the software (you can find a lot written about the Asus Eee hardware itself on the net).

The most serious problem by far is that WiFi was broken out of the box, due to a faulty interaction between the wifi-on/-off and wifi-toggle ACPI event handlers. With some basic searching I found plenty of people complaining about this issue, along with several fixes, but it’s utterly astounding to me that an OS distribution targeting a portable computer where WiFi is essential could ship with this bug in a release. The Eee exists to be a box that accesses everything through wireless; no WiFi is a “game over, you lose”-level bug. It was made more frustrating by the various networking tools not even showing wireless as an option unless it was already on. It would be better to show the wireless interface as present but down (as it was) and let the user select it to bring it up (which still wouldn’t have worked, but at least you’re not making them waste their time figuring out why it’s not there at all).

Some issues popped up even earlier, in the installer before the first boot. The keyboard layout selection screen has a text area where you can test typing, but it uses the current keyboard layout instead of the new one you’ve selected, which makes it useless. (The keyboard layout tool you get once you’ve booted into the OS has the same problem.) The time of day shown when you’re selecting the local timezone is wrong (and it says you can set it to the right time after boot), though after booting it gets the time of day correct.

After getting wireless working, the update manager said updates were available, so I let it install them. It got halfway through, then the laptop suspended itself because it was idle. When I un-suspended it, WiFi and X didn’t come back up and asusosd was busying the CPU. Restarting X brought up a login window on virtual console 9 (only discovered by seeing X running on tty9 in ps), but I had to reboot to get WiFi back so the update could finish. (The Eee seems to resume fine when it’s genuinely idle at suspend.)

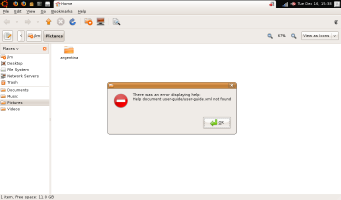

I copied the photos from my recent vacation onto its SSD, and went to view them in the file viewer you get from the “Pictures” folder. This brought up Eye of Gnome, and as my camera takes more pixels than the Eee’s display has, you have to scroll. Unfortunately, EOG doesn’t scroll when you make the normal scroll touchpad gestures, but instead changes zoom level. Also unfortunately, EOG doesn’t scroll when you press the arrow keys, but instead switches to other pictures in the CWD. So, to scroll in EOG it seems you have to move the mouse cursor to the arrows on the scrollbar and hold the mouse button down, which is a pretty crummy thing to ask of the user of an Eee. Also unfortunately, if you go to the help menu in the file viewer to try and learn how to change the preferred app for pictures to one which doesn’t suck as hard, you get an error dialog saying there’s no help.  Also also unfortunately, the “Preferred Applications” tool doesn’t let you change associations for pictures, only for a small handful of apps: Web browser, mail reader, terminal, and “multimedia” (videos & music) player.

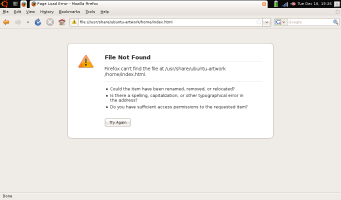

Moving on to more minor stuff: at night I turned the display brightness down, but twice it switched itself back up to full brightness a few seconds later. (The third turn-down seemed to stick, but it happened again this morning and took four turn-downs.) The Eee was getting hot, because it wasn’t turning the fan on. (After I installed cpufrequtils, the fan would turn on.) The Firefox start page is set to a file which isn’t installed. mtr needs root to run, and it’s not installed setuid.
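For the mtr complaint, here is a rough sketch of how you might check the setuid bit before reaching for sudo. The helper name `has_setuid` is made up for illustration, and the script only reports what it finds; the actual fix still needs root.

```shell
# Hypothetical helper: succeeds if the given file has the setuid bit set.
has_setuid() {
    [ -u "$1" ]
}

# Look up mtr's full path in PATH; empty if it isn't installed.
mtr_path=$(command -v mtr 2>/dev/null || true)

if [ -z "$mtr_path" ]; then
    echo "mtr not found in PATH"
elif has_setuid "$mtr_path"; then
    echo "$mtr_path is already setuid"
else
    # Only print the suggested fix; changing the bit requires root.
    echo "$mtr_path is not setuid; to fix: sudo chmod u+s $mtr_path"
fi
```

The `command -v` lookup is there so the check works in a plain POSIX shell as well as in zsh.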

The Eee’s “House” key does nothing — naturally, it should bring you back to the home menu display. This would be convenient, as you have to mouse over to the Ubuntu logo on the top left and click it to get there now, and the other top-left icons are available by cycling through Alt-Tab. There are often spurious mouse clicks generated by the touchpad when I’m trying to fine-position the mouse and it thinks I’ve tapped (the mouse should never require fine-positioning, but that seems to be a lost battle), and at the same time actual taps are often misinterpreted as short moves.

I’m not the first to run into these problems; they all seem to have been reported already. But that I’ve run into so many usability and functionality problems in so short a time means that Ubuntu Eee is clearly not ready for mainstream use. A sophisticated user can get around them (X didn’t come back after un-suspend? Alt-F1, login, start new X, ps, ah it’s on tty9, Alt-F9. mtr can’t get a raw socket? sudo chmod u+s =mtr), but that’s too much to expect from non-geeks, and it falls well short of the goal of “it just works”. I’m optimistic about the future: hopefully Ubuntu Eee won’t make a release with such a severe bug ever again, suspend/resume is getting a lot of work in the kernel right now, and a lot of this stuff just needs easy fixes, like putting the help files and start page where they’re looked for. (Eye of Gnome, OTOH, has sucked for years and should just be taken out back and shot.) And it’s a pretty nice experience for me as a “power user”, once I’ve patched the wireless, gotten the big initial update complete and set my image viewer to ImageMagick, and I can accept the brightness resetting and suspend problems and the “House” key not working for a few more update cycles. But it’ll be a while still before the dreamed-of shiny usable Linux laptop arrives.
